Selfie ANN

Selfie ANN is an algorithm that estimates how a set of Entities perceives the space in which they are located. If the assigned Entities are a sample of a process, this estimate can be used to predict how that process diffuses.

The Selfie algorithm learns the position of each Entity in relation to every other Entity. In this way, each Entity's position is learned collectively and convergently by all the other Entities.

When a new Entity (point) appears at a given position, all the trained Entities try to modify the position of the new point according to the logic they have previously learned (the computed hypersurface).

Data organization to implement Selfie ANN:

i, j = indices of the assigned points (Entities);

n=the index of the input vector and of the output vector;

p=the index of a generic point.
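The index scheme above can be illustrated with a minimal sketch. The source does not say what the input and output vectors contain, so the reading below is an assumption made only for illustration: each ordered pair (i, j) of assigned Entities yields one pattern whose input vector holds the coordinates of Entity i and whose output vector holds the coordinates of Entity j, with n running over the coordinate axes. The function name `build_pair_patterns` is hypothetical and is not part of Selfie ANN.

```python
import numpy as np

def build_pair_patterns(entities):
    """Enumerate ordered pairs (i, j), i != j, of assigned Entities.

    ASSUMPTION (not stated in the source): the input vector of a pair is
    Entity i's coordinates and the output vector is Entity j's coordinates,
    so the index n runs over the coordinate axes of both vectors.
    """
    entities = np.asarray(entities, dtype=float)   # shape (M, N)
    m = entities.shape[0]
    pairs = [(i, j) for i in range(m) for j in range(m) if i != j]
    idx = np.array(pairs, dtype=int).reshape(-1, 2)  # stays (0, 2) if M < 2
    inputs = entities[idx[:, 0]]    # row k: coordinates of Entity i of pair k
    targets = entities[idx[:, 1]]   # row k: coordinates of Entity j of pair k
    return idx, inputs, targets

# Three assigned Entities in a 2-D space give 3 * 2 = 6 ordered pairs.
idx, inputs, targets = build_pair_patterns([[0.0, 0.0], [1.0, 0.0], [0.0, 2.0]])
```

A generic point p would then be presented to the trained model using the same vector layout as the assigned Entities.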

During the test phase, three typical cases can occur:

Normal case 1: The new point has the same position as one of the trained Entities. In this case the new position assigned to it will be close to its original position (within the training error).

Normal case 2: The new point has coordinates different from those of every trained Entity. In this case the new position assigned to it will differ from its original position, in order to maximize the learned function.

Relevant case: The new point has coordinates different from those of every trained Entity, but this time its position remains the same. Such a point belongs to the learned function more than the trained Entities do. All new points of this kind, whose position remains unchanged during the test phase, are to be considered the Hidden Entities of the computed function and, consequently, the kernel of the function itself.
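The three cases can be told apart by comparing a test point with the trained Entities and with the position the network assigns to it. The sketch below is only an illustration of that decision rule, not the Selfie ANN implementation: the function name `classify_test_point`, the case labels, and the two tolerances (`match_tol` for "same position as a trained Entity", `move_tol` standing in for the training error) are all assumptions. The remapped position is taken as given, since the source does not describe how the network computes it.

```python
import numpy as np

def classify_test_point(original, remapped, trained, match_tol=1e-6, move_tol=1e-3):
    """Label a test point with one of the three cases described in the text.

    original : coordinates of the new point before the test phase
    remapped : coordinates assigned to it by the trained model (given)
    trained  : array of shape (M, N) with the trained Entities' coordinates
    match_tol, move_tol : hypothetical tolerances, not defined in the source
    """
    original = np.asarray(original, dtype=float)
    remapped = np.asarray(remapped, dtype=float)
    trained = np.asarray(trained, dtype=float)

    # Does the new point coincide with one of the trained Entities?
    dist_to_trained = np.linalg.norm(trained - original, axis=1)
    coincides = bool(np.any(dist_to_trained <= match_tol))

    # How far did the test phase move the point?
    displacement = float(np.linalg.norm(remapped - original))

    if coincides:
        return "normal_1"        # trained position, expected to stay close
    if displacement > move_tol:
        return "normal_2"        # new position, moved by the learned function
    return "hidden_entity"       # new position, left unchanged (relevant case)

trained = [[0.0, 0.0], [1.0, 1.0]]
labels = [
    classify_test_point([0.0, 0.0], [0.0005, 0.0], trained),
    classify_test_point([0.5, 0.5], [0.8, 0.2], trained),
    classify_test_point([0.5, 0.5], [0.5, 0.5], trained),
]
```

In practice the tolerances would have to be chosen in relation to the training error, because a trained network rarely returns a point to exactly the same coordinates.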

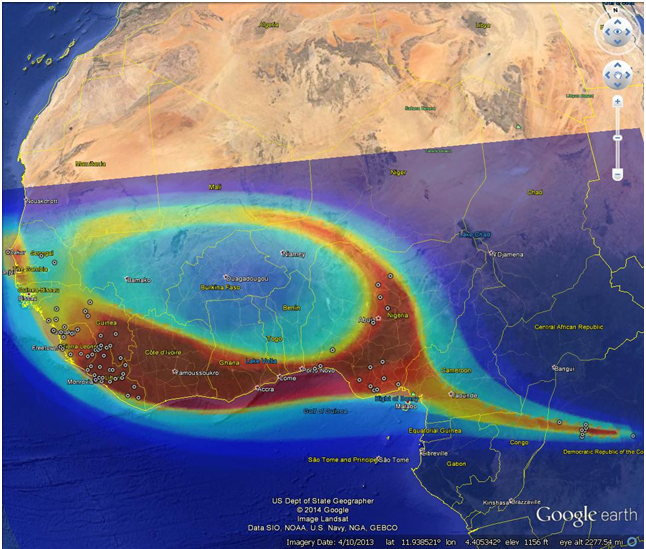

Example: Ebola epidemic (up to October 2014).

Figure: Selfie ANN estimation of the Ebola epidemic (red = diffusion, circles = assigned Entities).