Bimodal

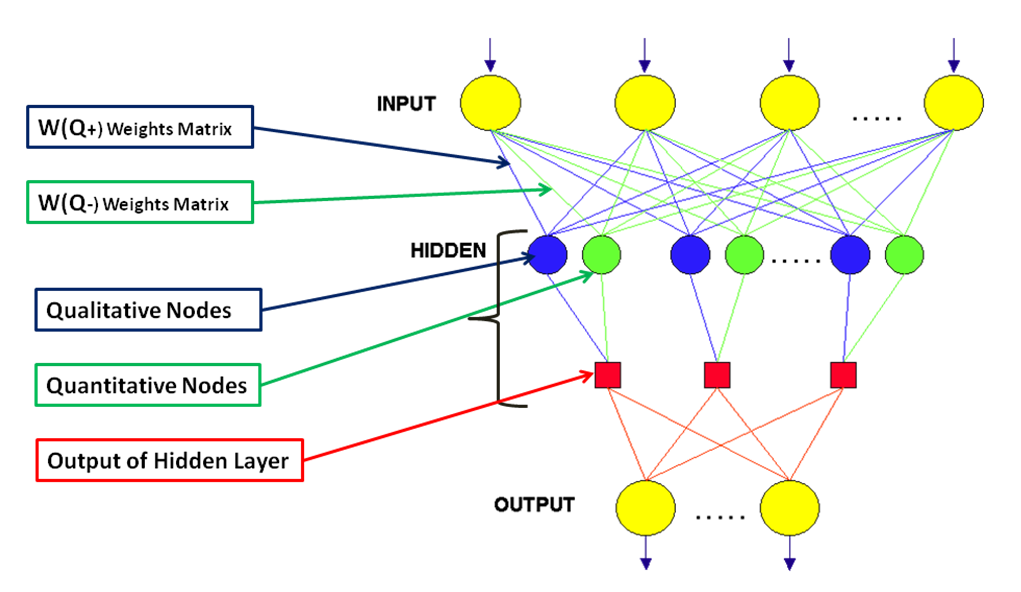

The Bimodal is a supervised ANN that encodes the input vector in a complex layer of hidden nodes. Each hidden node consists of two sub-nodes, each with its own fan-in connections: the first sub-node works by gradient descent, while the second works as a vector quantization algorithm (a nuanced form of the Winner Takes All algorithm). The outputs of the two sub-nodes are fused into a single output value according to a specific algorithm.
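The structure of a single hidden node can be sketched roughly as follows. This is only an illustration under stated assumptions, not the Bimodal's actual equations: the sigmoid response, the Gaussian radial response, the `width` parameter, and in particular the product used to fuse the two sub-node outputs are all hypothetical choices, since the fusion algorithm and the learning law are not specified here. Only the forward pass is shown.

```python
import math


def sigmoid(z):
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-z))


def gradient_subnode(x, w, b):
    """Hyperplane-type sub-node: sigmoid of a weighted sum of the inputs.

    Assumption: this stands in for the sub-node trained by gradient descent.
    """
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def quantization_subnode(x, c, width=1.0):
    """Radial sub-node: response decays with distance from the prototype c.

    Assumption: a Gaussian of the Euclidean distance stands in for the
    vector quantization sub-node; `width` is a hypothetical parameter.
    """
    d2 = sum((xi - ci) ** 2 for xi, ci in zip(x, c))
    return math.exp(-d2 / (2.0 * width ** 2))


def bimodal_hidden_node(x, w, b, c, width=1.0):
    """Fuse the two sub-node outputs into one value.

    Assumption: a plain product is used as a placeholder for the
    unspecified fusion algorithm.
    """
    return gradient_subnode(x, w, b) * quantization_subnode(x, c, width)


# Each sub-node has its own fan-in parameters: (w, b) and c.
x = [0.5, -1.0]
out = bimodal_hidden_node(x, w=[1.0, 2.0], b=0.0, c=[0.5, -1.0])
print(out)
```

With this placeholder fusion, the node responds strongly only when the input lies both on the favourable side of the hyperplane and close to the prototype, which is one simple way two such responses could be combined.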

The Bimodal follows a new learning law.

The Bimodal shows an extraordinary capacity for convergence on complex problems. Furthermore, it provides a good example of how the gradient technique (which models the hypersurface of the function with hyperplanes) and the vector quantization technique (which models the function with radial functions) can merge into a single algorithm, with clear technical advantages and increased biological plausibility.
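The contrast between the two modelling styles can be written in generic textbook form. These are not the Bimodal's own equations; the symbols $w$, $b$, $c$, $s$ and the choice of a Gaussian radial function are assumptions made here for illustration only.

$$
y_{\text{hyperplane}}(x) = \sigma\left(w^\top x + b\right), \qquad
y_{\text{radial}}(x) = \exp\left(-\frac{\lVert x - c \rVert^2}{2 s^2}\right)
$$

The first response is constant along every hyperplane $w^\top x = \text{const}$, while the second is constant on every sphere centred at the prototype $c$.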

References

Word Document [Still unpublished].